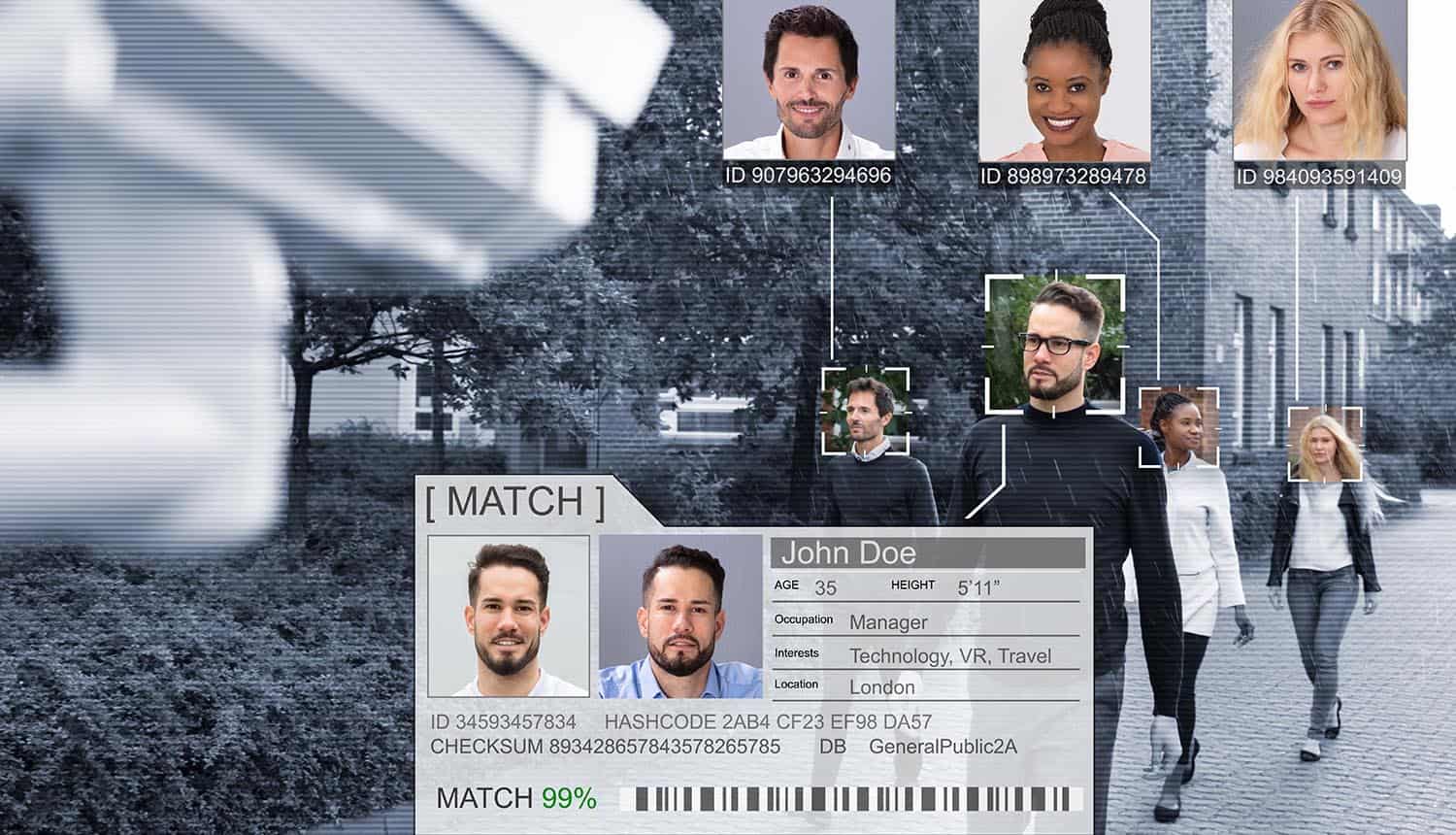

Facial recognition surveillance technology is a hot-button issue that pulls together several major social concerns: privacy, profiling, authoritarianism and free expression. As camera hardware and facial recognition software advance to the point that a person can be added to a database and then located in a crowd in under 10 minutes, serious questions emerge about the net social value of these technologies.

Prominent political voices, such as presidential candidates Bernie Sanders and Tom Steyer, have called for banning its use in law enforcement. Some American cities have taken it upon themselves to pre-emptively ban it at the local level: San Francisco, Oakland, Berkeley and the Boston suburb of Somerville.

Concerns over potential abuse have driven these efforts, and they also motivated a coalition of 40 groups led by the Electronic Privacy Information Center (EPIC) to draft a letter recommending that federal agencies suspend their use of facial recognition surveillance systems.

The facial recognition surveillance debate

The EPIC coalition addressed the letter to the Privacy and Civil Liberties Oversight Board (PCLOB), an organization formed in 2004 to advise the executive branch on balancing anti-terrorism and law enforcement policies with privacy and civil liberties concerns. PCLOB has been influential in the past. After the Edward Snowden leaks exposed the NSA's warrantless surveillance programs, a PCLOB follow-up study found that the NSA's bulk data collection program had not contributed to disrupting any terrorist attacks, and it recommended that the program be terminated. This led, in 2015, to metadata collection being narrowed to the subjects of investigations only.

The EPIC letter cites several specific concerns, including:

- The use of the Clearview AI facial recognition app, which runs on the personal phones of police officers. Some agencies in New York City have been using the app without any public oversight, and some individual officers have been using it even though their departments have not authorized it.

- The significantly higher rate of “false positive” matches when scanning people of color, particularly African-Americans and Asians, as revealed by a recent National Institute of Standards and Technology (NIST) study.

- The deployment of facial recognition surveillance by authoritarian regimes around the world, particularly its use by the Chinese government in response to the Hong Kong protests.

This debate is sometimes framed as a question of accuracy, but the authors of the EPIC letter are clear that improved accuracy would not change their view. As a potential solution, the group points to a European Union proposal to ban all use of facial recognition in public spaces for five years while safeguards are put in place.

The letter is co-signed by advocacy groups including the Electronic Frontier Foundation, the Consumer Federation of America, the Freedom of the Press Foundation, Media Alliance, the National LGBTQ Task Force and Patient Privacy Rights.

Supporters see facial recognition surveillance technology as a potent crime deterrent, but critics feel the cost is too high. The central issue is lack of oversight: authorities have to be trusted not to abuse it, yet high-profile cases of abuse and “scope creep” are easy to find around the world. Ideally, facial recognition surveillance would be used only to identify bad actors in limited, targeted applications and would not report on or store data about anyone else; in practice, the technology makes unexplained appearances at protests, in shopping districts and at football matches. There is also the question of how private companies holding enormous databases of facial images will use and secure them.

China is the most extreme example of facial recognition surveillance used as a dystopian method of population control. The scale of deployment there exceeds that of any other country, but scale is only part of the problem: the government also actively suppresses speech it considers “socially unharmonious” and has proposed a “social credit” system to rate citizens' behavior and compliance. Under early versions of this system, which use facial recognition surveillance to monitor behavior in public places, some citizens have already been blacklisted from basic services such as public transportation and bank loans.

Potential impact of facial recognition surveillance

This coalition joins a growing number of voices calling for both states and the federal government to pump the brakes on facial recognition surveillance systems.

The House of Representatives Oversight Committee recently held the third in a series of hearings on facial recognition technology and data protection. These hearings have featured bipartisan questioning of the public value and necessity of facial recognition surveillance, even if the systems could be made perfectly accurate.

Law enforcement supporters of the technology argue that it would be “negligent” not to use facial recognition systems, and that the systems are used only to generate investigative leads, not to make arrests. But this argument does not address the full range of potential abuses of biometric data. Congress will have to take up the issue at some point; legislation that curtails the use of facial recognition surveillance has already been drafted.